emmtrix Edge AI Compiler (eAI)

High-Performance AI Compilation for Embedded Targets

The emmtrix Edge AI Compiler enables seamless deployment of machine learning models on embedded systems by automatically transforming ONNX and PyTorch models into highly optimized C code. Starting from a generic representation, the compiler applies advanced code transformations to tailor execution for the target hardware, significantly improving efficiency and performance.

By combining techniques such as kernel fusion, loop optimization, memory footprint reduction, and hardware-aware acceleration (including SIMD and dedicated accelerators), the emmtrix Edge AI Compiler ensures that AI models run reliably and efficiently even on resource-constrained edge devices.


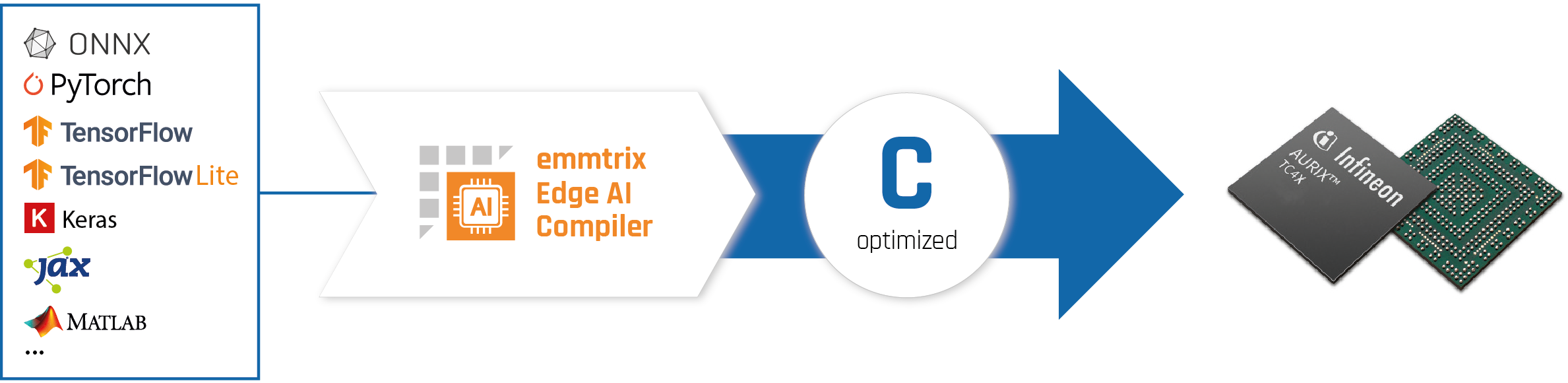

AI Workflow: From ML Model to Optimized Embedded Code

Figure 1: emmtrix Edge AI Compiler Workflow

The emmtrix Edge AI Compiler follows a structured, multi-stage compilation pipeline to transform high-level machine learning models into efficient embedded code.

In the first step, models in ONNX or PyTorch format are translated into portable C code using the open-source tools emx-onnx-cgen and emx-pytorch-cgen. This intermediate representation serves as the foundation for subsequent optimizations.

A series of systematic code transformations is then applied to improve execution efficiency and reduce resource usage. These include, among others, elimination of temporary variables, pointer resolution, constant propagation, replacement of known mathematical functions, and comprehensive loop optimizations such as normalization, fusion, and simplification of control structures.
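As an illustration of what loop fusion and temporary elimination mean in practice, the following minimal sketch contrasts an unfused operator chain with its fused equivalent. It is a hand-written example, not actual compiler output: the function names, the fixed size `N`, and the scale/bias/ReLU operator chain are all assumptions chosen for illustration.

```c
#include <assert.h>
#include <stddef.h>

#define N 8 /* hypothetical tensor size, chosen for illustration */

/* Before: one loop and one temporary buffer per operator, as a generic
 * model-to-C translation might plausibly look (assumption). */
void scale_bias_relu_naive(const float *x, float *y)
{
    float tmp_scale[N];
    float tmp_bias[N];
    assert(x != NULL && y != NULL);
    for (size_t i = 0; i < N; ++i)
        tmp_scale[i] = x[i] * 2.0f;
    for (size_t i = 0; i < N; ++i)
        tmp_bias[i] = tmp_scale[i] + 1.0f;
    for (size_t i = 0; i < N; ++i)
        y[i] = tmp_bias[i] > 0.0f ? tmp_bias[i] : 0.0f;
}

/* After: the three loops are fused into one and both temporary arrays
 * are replaced by a single scalar, so less memory is read and written. */
void scale_bias_relu_fused(const float *x, float *y)
{
    assert(x != NULL && y != NULL);
    for (size_t i = 0; i < N; ++i) {
        float v = x[i] * 2.0f + 1.0f;
        y[i] = v > 0.0f ? v : 0.0f;
    }
}
```

Both functions compute the same result. The fused version makes a single pass over the input and needs no intermediate buffers, which is the kind of saving that matters on memory-constrained targets.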

Based on this optimized representation, the backend generates target-specific C code. It leverages hardware-aware techniques, including the use of intrinsics, to fully exploit the capabilities of the underlying processor or accelerator.
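To give a rough idea of what intrinsics-based, target-specific C code can look like, here is a minimal hand-written sketch of a ReLU kernel. It is not output of the emmtrix backend; the function name `relu_inplace` is hypothetical, and the choice of SSE and Neon intrinsics (with a scalar fallback) is an assumption made so the example compiles on common hosts.

```c
#include <assert.h>
#include <stddef.h>

#if defined(__SSE__)
#include <xmmintrin.h>   /* x86 SSE intrinsics */
#elif defined(__ARM_NEON)
#include <arm_neon.h>    /* Arm Neon intrinsics */
#endif

/* Clamp negative values to zero, four floats at a time where a
 * supported vector unit is available. */
void relu_inplace(float *data, size_t n)
{
    size_t i = 0;
    assert(data != NULL || n == 0);
#if defined(__SSE__)
    const __m128 zero = _mm_setzero_ps();
    for (; i + 4 <= n; i += 4) {
        __m128 v = _mm_loadu_ps(data + i);            /* unaligned load */
        _mm_storeu_ps(data + i, _mm_max_ps(v, zero)); /* max(v, 0) */
    }
#elif defined(__ARM_NEON)
    const float32x4_t zero = vdupq_n_f32(0.0f);
    for (; i + 4 <= n; i += 4) {
        float32x4_t v = vld1q_f32(data + i);
        vst1q_f32(data + i, vmaxq_f32(v, zero));
    }
#endif
    /* Scalar loop: handles the remainder, or all elements when no
     * supported vector unit is detected at compile time. */
    for (; i < n; ++i) {
        if (data[i] < 0.0f)
            data[i] = 0.0f;
    }
}
```

The same source compiles with a standard C toolchain on either architecture; the preprocessor selects the intrinsics that match the target.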

The resulting code can then be compiled with the standard C toolchain of the target platform, enabling seamless integration into existing embedded software environments.

Features

- Automatic Model Conversion: Seamless translation of ONNX and PyTorch models into portable C code.

- Advanced Code Transformation Pipeline: Systematic application of optimizations such as constant propagation, dead code elimination, and pointer resolution.

- Loop Optimization and Kernel Fusion: Improved execution efficiency through loop normalization, fusion, and simplified control flow.

- Memory Footprint Reduction: Minimization of memory usage by eliminating temporary variables and optimizing data access patterns.

- Hardware-Aware Code Generation: Generation of target-specific C code leveraging intrinsics to utilize SIMD units and hardware accelerators.

- Deterministic Execution: Predictable runtime behavior suitable for real-time and safety-critical embedded systems.

Your Benefits

- Reduced Time-to-Deployment

- Improved Runtime Performance

- Lower Memory Consumption

- Consistent and Reproducible Results

- Portability Across Platforms

- Better Utilization of Hardware Capabilities

- Increased Reliability

Supported Platforms

The tool supports vectorization for the next generation of Infineon 32-bit TriCore™ AURIX™ TC4x MCUs, MPUs featuring Arm Cortex-A cores with the Neon or Scalable Vector Extension (SVE) instruction sets, x86 processors with the Advanced Vector Extensions (AVX) instruction set, and microprocessors implementing the RISC-V “V” Vector Extension (RVV).

Our generic solution allows us to support additional architectures with ease. Please contact us if required.

Dive deeper: Learn how eCV enables efficient vectorization for RISC-V RVV, powered by the official RISC-V Vector C Intrinsics v1.0.

AURIX™ (TC4x PPU)

Associated Partners

emmtrix Technologies is an Infineon Associated Partner with over 10 years of experience working with the Infineon AURIX™ microcontroller family and has been actively collaborating with Infineon for the past five years.

Supported Compilers

There is no standard for how vector instructions are programmed in C, so any vectorization tool must be adaptable. The emmtrix Edge AI Compiler can be adapted to work with any C programming model, providing flexibility across a range of platforms and compilers.

There are three main vector programming models in the C language:

- Inline Assembly: This approach involves using inline assembly within the C code, offering fine control over hardware but requiring deep knowledge of the processor’s architecture.

- Platform-Specific Intrinsics (__builtin functions): These are special functions provided by the compiler to leverage specific hardware features. They provide a more abstracted way to access vector units compared to inline assembly, but still require knowledge of platform-specific details.

- Compiler-Specific Vector C Extension + Platform-Specific Intrinsics: This approach uses compiler-specific extensions to the C language, such as vector data types and overloaded operators, for easier programming, portability, and readability of the vectorized code. However, since the vector C extensions only cover the fundamental features of the vector units, they are combined with platform-specific intrinsics to achieve a balance between portability and performance optimization.

The emmtrix Edge AI Compiler supports all three programming models and typically uses the most abstract model to provide easy readability of the generated code.
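The most abstract of the three models can be sketched with the vector extension offered by GCC and Clang. The example below is a hand-written illustration under that assumption, not generated code: the type name `v4f`, the macro `HAVE_VECTOR_EXT`, and the function `add_scaled` are hypothetical, and a scalar fallback is included for compilers without the extension.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

#if defined(__GNUC__) || defined(__clang__)
/* Compiler-specific vector type: four floats in 16 bytes. */
typedef float v4f __attribute__((vector_size(16)));
#define HAVE_VECTOR_EXT 1
#endif

/* out[i] = a[i] + k * b[i] */
void add_scaled(const float *a, const float *b, float k,
                float *out, size_t n)
{
    size_t i = 0;
    assert(a != NULL && b != NULL && out != NULL);
#ifdef HAVE_VECTOR_EXT
    const v4f vk = {k, k, k, k};
    for (; i + 4 <= n; i += 4) {
        v4f va, vb, vr;
        memcpy(&va, a + i, sizeof va);   /* alignment-safe load */
        memcpy(&vb, b + i, sizeof vb);
        vr = va + vk * vb;               /* overloaded + and * on vectors */
        memcpy(out + i, &vr, sizeof vr); /* alignment-safe store */
    }
#endif
    /* Scalar remainder, or full fallback without the extension. */
    for (; i < n; ++i)
        out[i] = a[i] + k * b[i];
}
```

The arithmetic line reads like ordinary scalar C, which is the readability advantage of this model. Operations that the extension does not cover would still need platform-specific intrinsics, as described in the third model above.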

Technical Documentation

For technical documentation on supported platforms, compilers, and tool internals, visit our developer wiki.

Let's Get in Touch

We believe that the best solutions start with a conversation. Whether you are exploring static code analysis, performance estimation, or code optimization – we’re here to help you reach your goals faster and more efficiently.